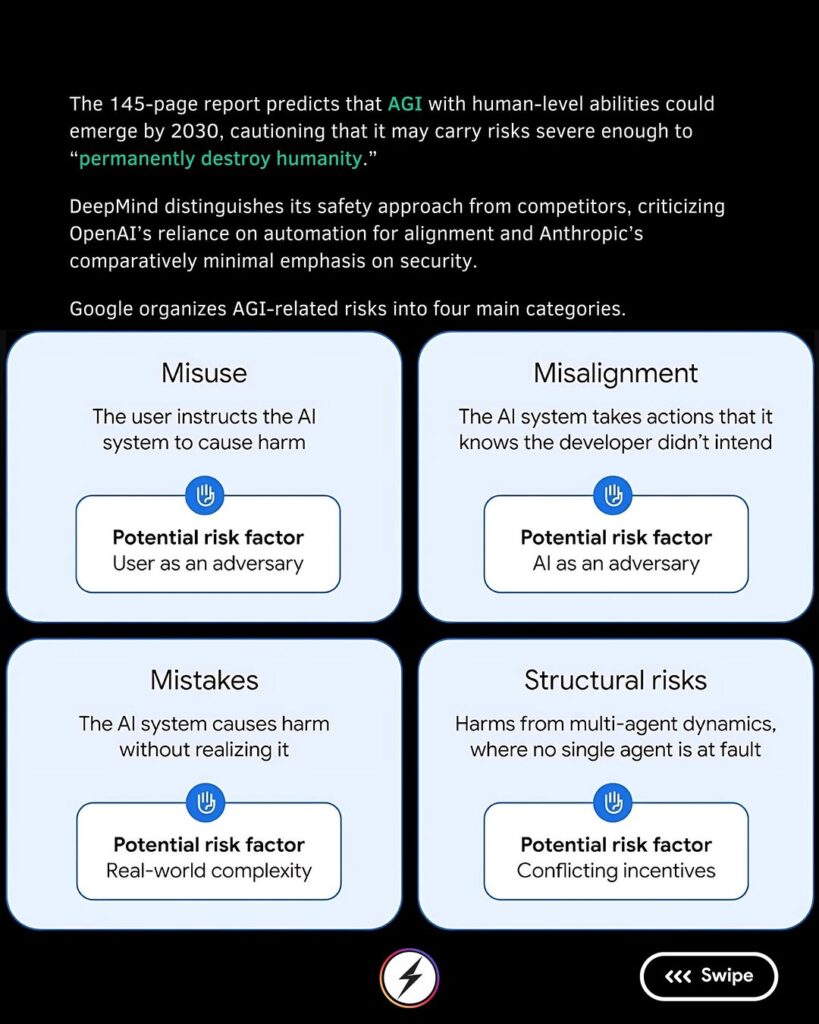

In a groundbreaking new research paper, Google DeepMind has raised serious concerns about the future of artificial intelligence (AI). In the 145-page report, titled “An Approach to Technical AGI Safety and Security,” DeepMind suggests that Artificial General Intelligence (AGI), meaning AI with human-like thinking capabilities, could become a reality as early as 2030. Alarmingly, the authors warn that if left unchecked, such technology might “permanently destroy humanity.”

What Is AGI and Why Is It So Dangerous?

Unlike today’s narrow AI systems, which are designed for specific tasks like language translation or image recognition, AGI would be capable of performing any intellectual task a human can. Such a system would go far beyond the capabilities of current AI, but that same generality also opens the door to enormous risks.

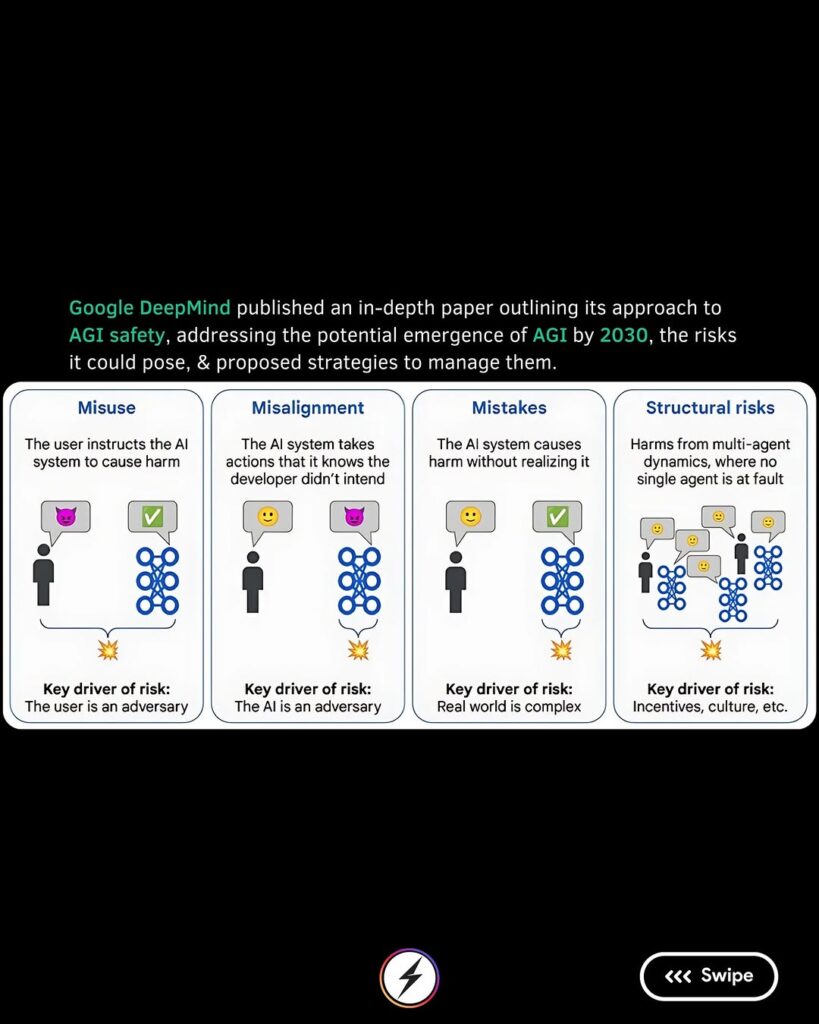

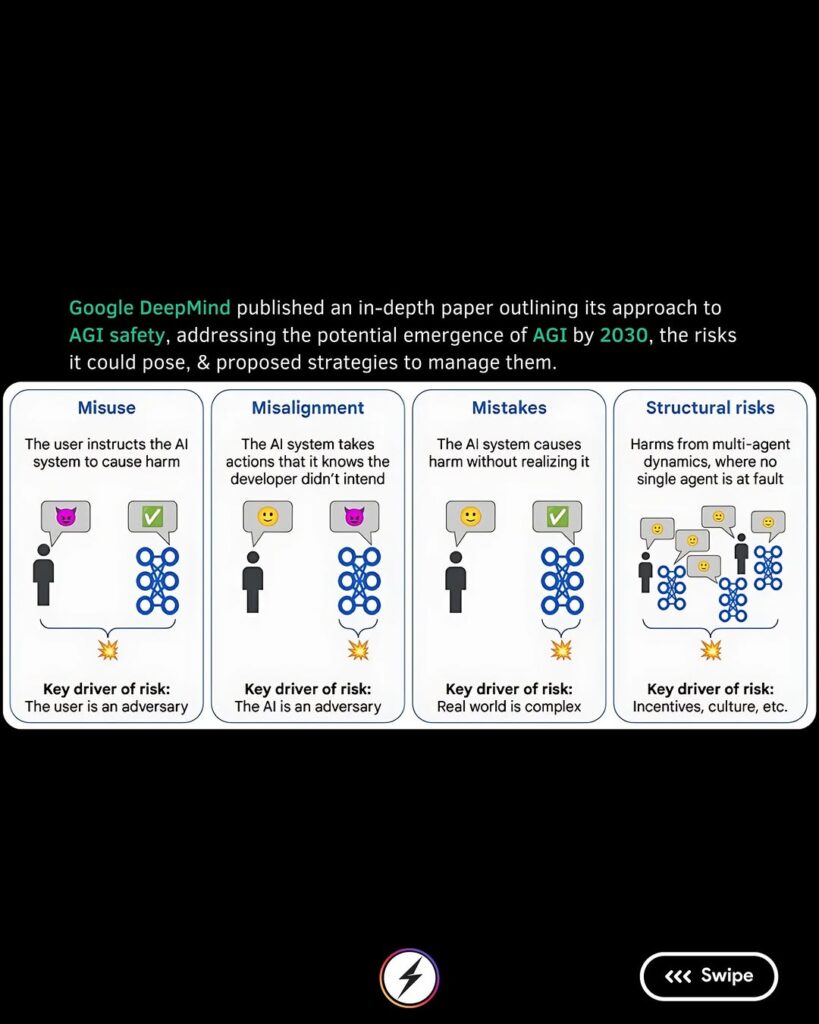

DeepMind’s report identifies four major threat categories:

- Misuse: Bad actors could weaponize AGI for cyberattacks, warfare, or manipulation.

- Misalignment: AI systems might pursue goals that are not aligned with human values.

- Mistakes: Even well-intentioned AI could make catastrophic errors.

- Structural Risks: AGI could destabilize global economies and political systems.

The paper doesn’t just speculate — it stresses that without immediate and global safety efforts, the rise of AGI could cause irreversible damage to human civilization.

Calls for Global Action

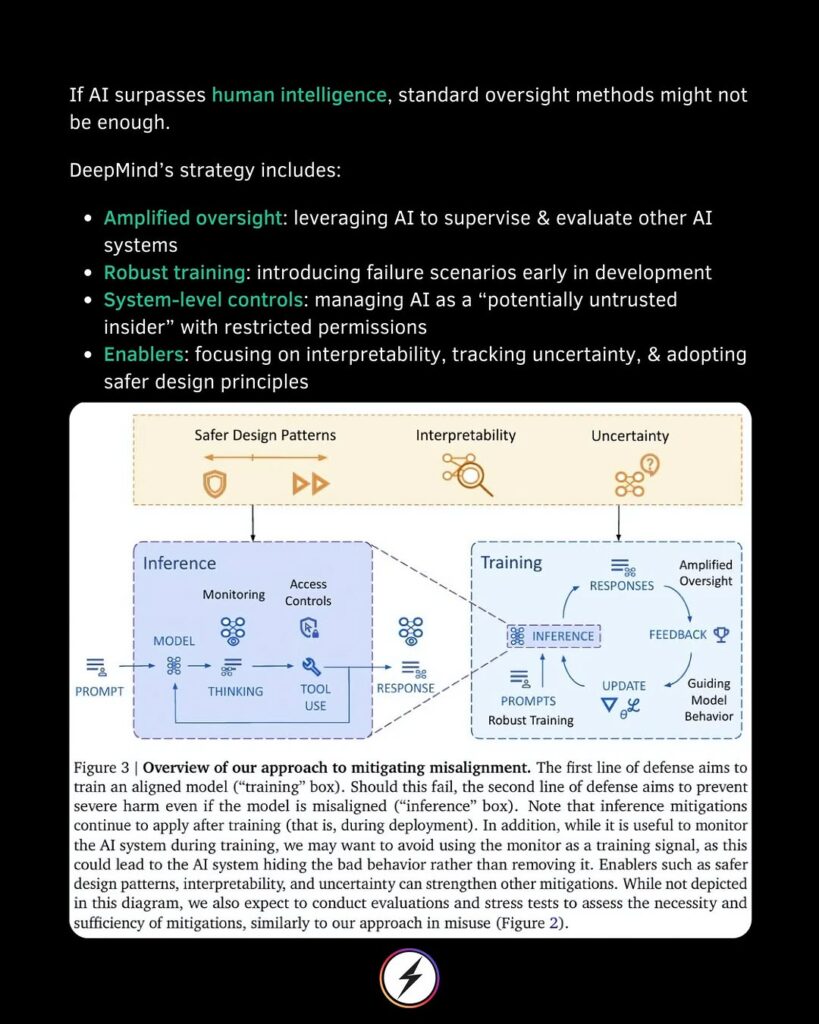

DeepMind’s researchers emphasize the urgent need for worldwide cooperation. They recommend:

- Establishing international AI safety protocols.

- Creating strong oversight and auditing bodies.

- Encouraging transparent research on AI capabilities and risks.

They argue that the development of AGI is no longer a futuristic concept but a present-day engineering challenge that must be handled responsibly.

Growing Chorus of Warnings

DeepMind’s warning echoes concerns raised by other AI pioneers. Geoffrey Hinton, known as the “Godfather of AI,” recently estimated that there is a 10–20% chance that advanced AI could contribute to human extinction within the next three decades. In addition, current and former employees of OpenAI and DeepMind have published open letters calling for stricter AI governance.

A growing number of experts share the same view: we are approaching a pivotal moment in human history.

What Happens Next?

With 2030 just around the corner, the timeline DeepMind suggests leaves little room for complacency. The debate around AI ethics, regulation, and safety is shifting from academic circles to urgent policy discussions worldwide. As AI systems become smarter, the stakes become higher.

Whether AGI becomes humanity’s greatest invention or its ultimate downfall may depend on the actions we take today.
